When Your Release Cycle Outpaces Your Documentation Workflow

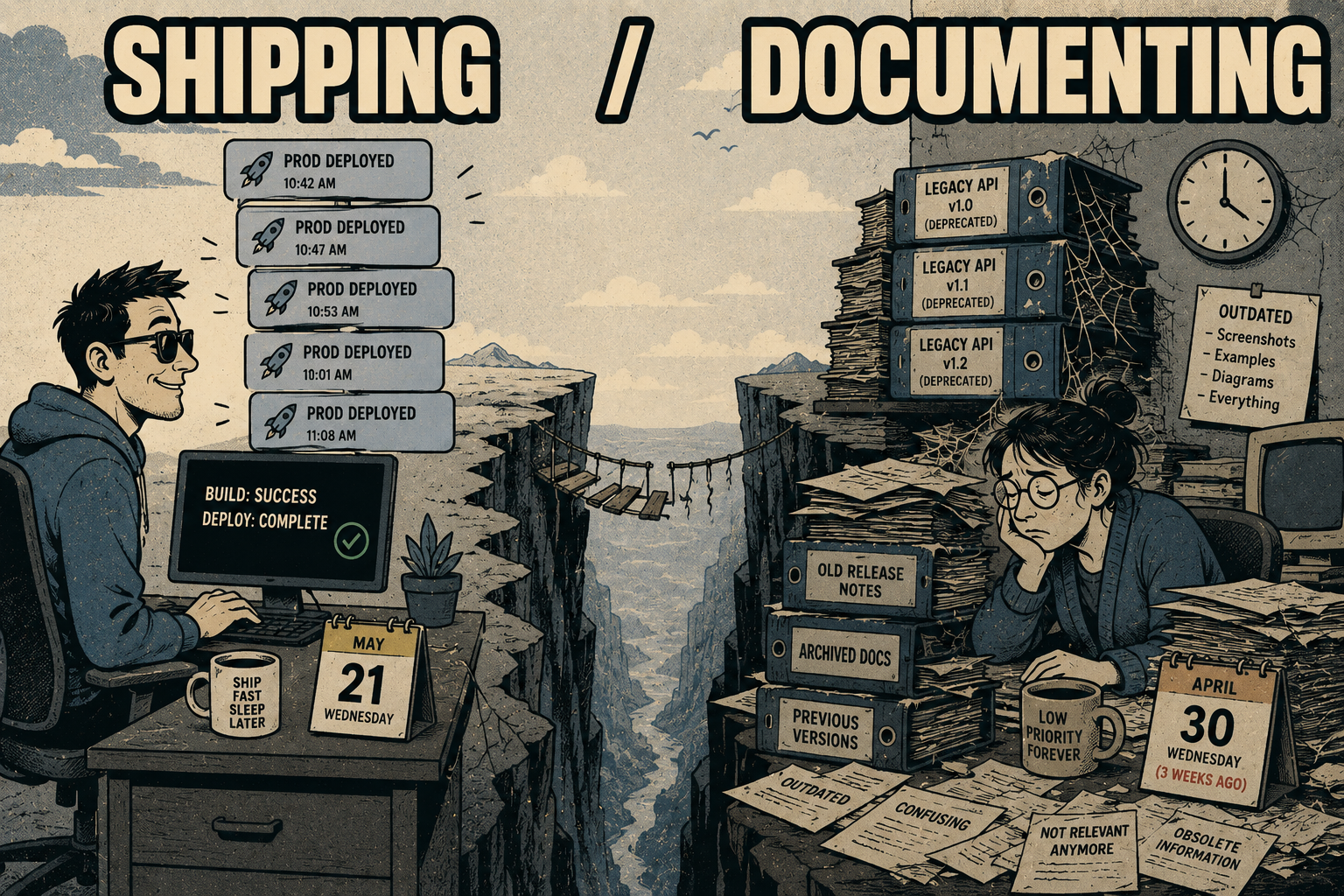

Your team shipped four times this week. A hotfix went out Tuesday without going through the normal review cycle. Two feature flags flipped mid-sprint. Three microservices, owned by different teams, each had their own deployment windows. By Friday, the product your customers are using looks meaningfully different from the product your documentation describes.

That gap is not a writing problem. It's an infrastructure problem.

The teams that are struggling with documentation drift are not struggling because they don't care about docs. They're struggling because the systems they use to produce documentation were designed for a different release cadence. A workflow built around quarterly releases, where a technical writer could interview engineers and draft a changelog over a few days, does not survive contact with daily deploys.

DORA research defines elite-performing engineering teams as those deploying on-demand, sometimes multiple times per day. That's not a niche category anymore. It's the operational baseline for any company competing on product velocity. And the documentation infrastructure most of those teams are running was built for a world that no longer exists.

What Actually Breaks at High Velocity

The failure modes are specific, and they compound.

The most common is changelog drift. Code ships, commits merge, and the changelog sits unchanged because no one's job is to update it in real time. After a week of daily deploys, the changelog describes a product that's three or four releases behind. After a month, it's fiction.

API references go stale in a different way. A parameter gets renamed. An endpoint gets deprecated. A new authentication requirement gets added. The code knows. The tests know. The documentation does not. Research on API drift suggests that a significant share of APIs do not conform to their published specifications, and the root cause is almost always the same: the spec was written once and never updated to match the implementation.

Release notes are their own category of problem. When hotfixes skip the normal review cycle, they often skip the documentation cycle too. The release notes for a given build describe what was planned, not what shipped. Support teams work from those notes. When a customer calls with a problem that was introduced in Tuesday's hotfix, the support team is working from assumptions that predate the issue.

The downstream cost is measurable. Research on documentation accuracy from a survey of contact center professionals found that fewer than one in five organizations rated their knowledge base as "very accurate," and poorly maintained documentation correlates with a 23% increase in support tickets. That's not a small number. That's a structural tax on your support team, paid every month, because documentation wasn't treated as part of the deployment pipeline.

Why the Traditional Workflow Can't Keep Up

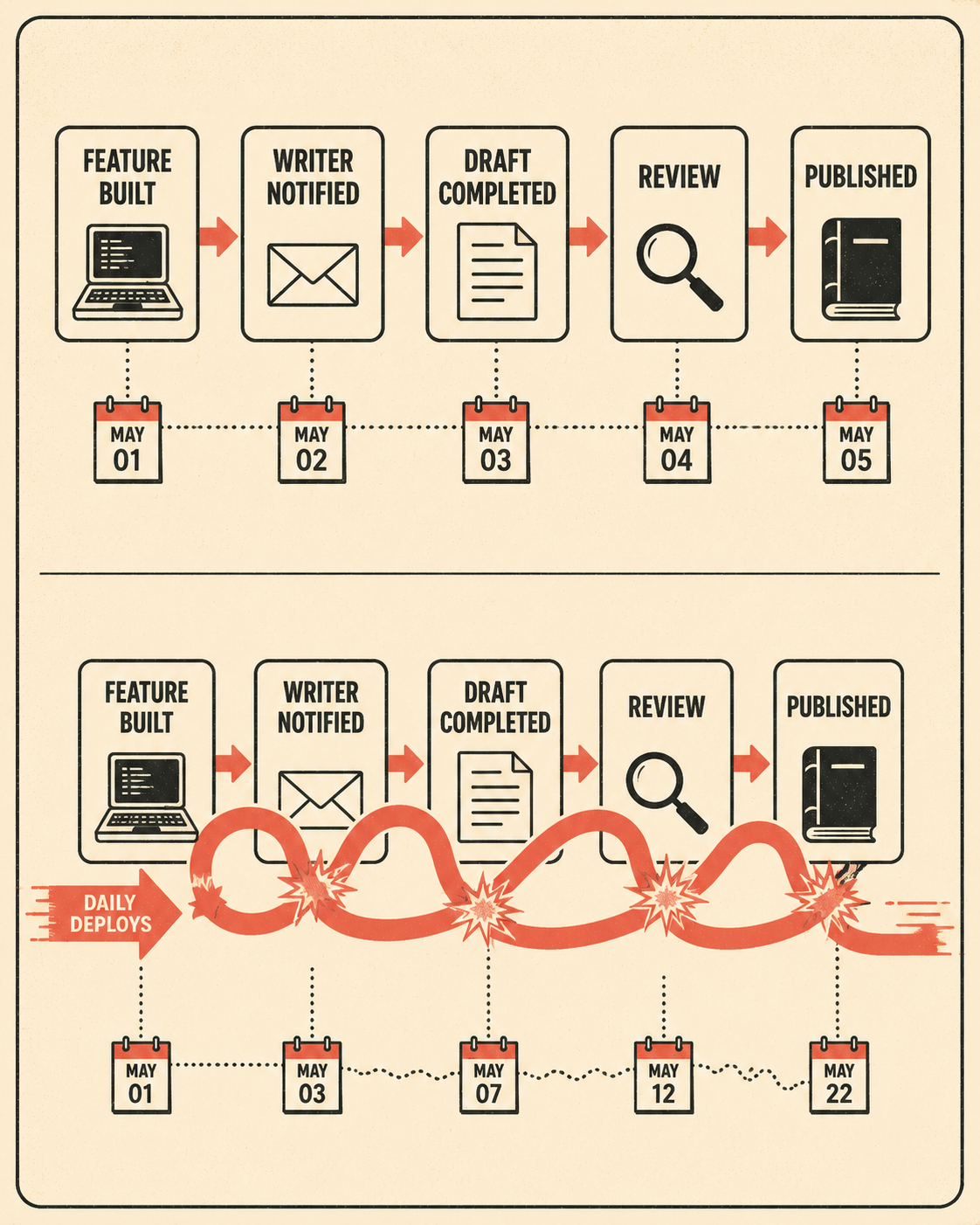

The traditional documentation workflow is sequential and human-dependent. A developer builds a feature. A technical writer learns about it, usually through a ticket or a meeting. The writer drafts the documentation. Someone reviews it. It gets published. The whole cycle takes days, sometimes weeks.

At daily deploy cadence, that cycle is already broken before it starts. The problem isn't that developers don't care about documentation accuracy; it's that keeping docs manually in sync with a fast-moving codebase is physically impossible when you're shipping multiple times a day. By the time the writer finishes the guide for a feature, the feature has changed.

There's a structural problem underneath this, too. Documentation has historically lived outside the codebase. Wikis, Confluence pages, Notion docs. When documentation isn't versioned alongside code, it doesn't update when code updates. Even teams that have adopted a docs-as-code approach, storing Markdown files in the repository, often find that they've automated the publishing pipeline without solving the content problem. The CI/CD system deploys the documentation continuously. It just continuously deploys outdated information.

Stripe's engineering team ran into a version of this when their documentation platform was a monolithic Ruby application with content freely mixing HTML, Markdown, and ERB templates. The problem they identified: content authoring had effectively become software development, with all the complexity and overhead that implies. Their solution was to build a structured authoring system (Markdoc) that separated content from code and made documentation maintainable at scale. The lesson isn't that you need to build your own authoring system. It's that documentation infrastructure has to be designed for the actual release cadence of the team using it.

The Operational Fix

The frame that works here is: generate fast, validate well, publish confidently.

Generation has to be automatic and tied to the engineering workflow. Changelogs should be compiled from commit messages and pull request descriptions, not written by hand after the fact. API references should be generated from the OpenAPI spec that's already being validated against the codebase during the build. Release notes should pull from the same source of truth that the deployment pipeline uses. Recent research on LLM-based commit message generation confirms that automated generation from code diffs can produce accurate, context-sensitive documentation summaries at a quality level that makes human review tractable rather than exhaustive.

The word "tractable" matters. The goal of automated generation is not to eliminate human review. It's to make human review fast enough to happen at the same cadence as releases.

That validation step is where the workflow earns its keep. Spot-checking generated changelogs against actual commits. Reviewing API docs for completeness, especially around edge cases and error states that automated tools tend to underweight. Confirming that release notes match what customers will actually experience, particularly when feature flags mean different users are seeing different versions of the product. Research on AI-assisted documentation workflows shows that LLM-generated documentation is most reliable when it operates on structured inputs from the codebase itself, and most unreliable when it's working from informal descriptions. That's an argument for tying generation to engineering artifacts, not for avoiding automation.

The human role in this workflow is not to write documentation from scratch. It's to manage the output of a system that generates documentation automatically. That's a different job, and it requires fewer people than the traditional model. It also requires different skills: the ability to read a generated changelog and quickly identify what's missing or wrong, rather than the ability to interview an engineer and synthesize a guide from scratch.

What this looks like operationally: documentation is generated as part of the deployment pipeline. A reviewer (a senior writer, a technical lead, or both) checks the output against the actual release. Edge cases and inconsistencies get flagged and corrected. The corrected output gets published. The whole cycle runs at the same cadence as the release.

That's the infrastructure most high-velocity teams don't have. Not because it's technically impossible, but because no one has treated documentation as a first-class part of the deployment pipeline.

Doc Holiday generates release notes, changelogs, and API references directly from engineering workflows, and gives teams the structure to validate and scale that output without rebuilding a large documentation headcount. If your release cycle has already outpaced your documentation workflow, that's where to start.