How to Set up and Maintain a Public Changelog for Your SaaS Product

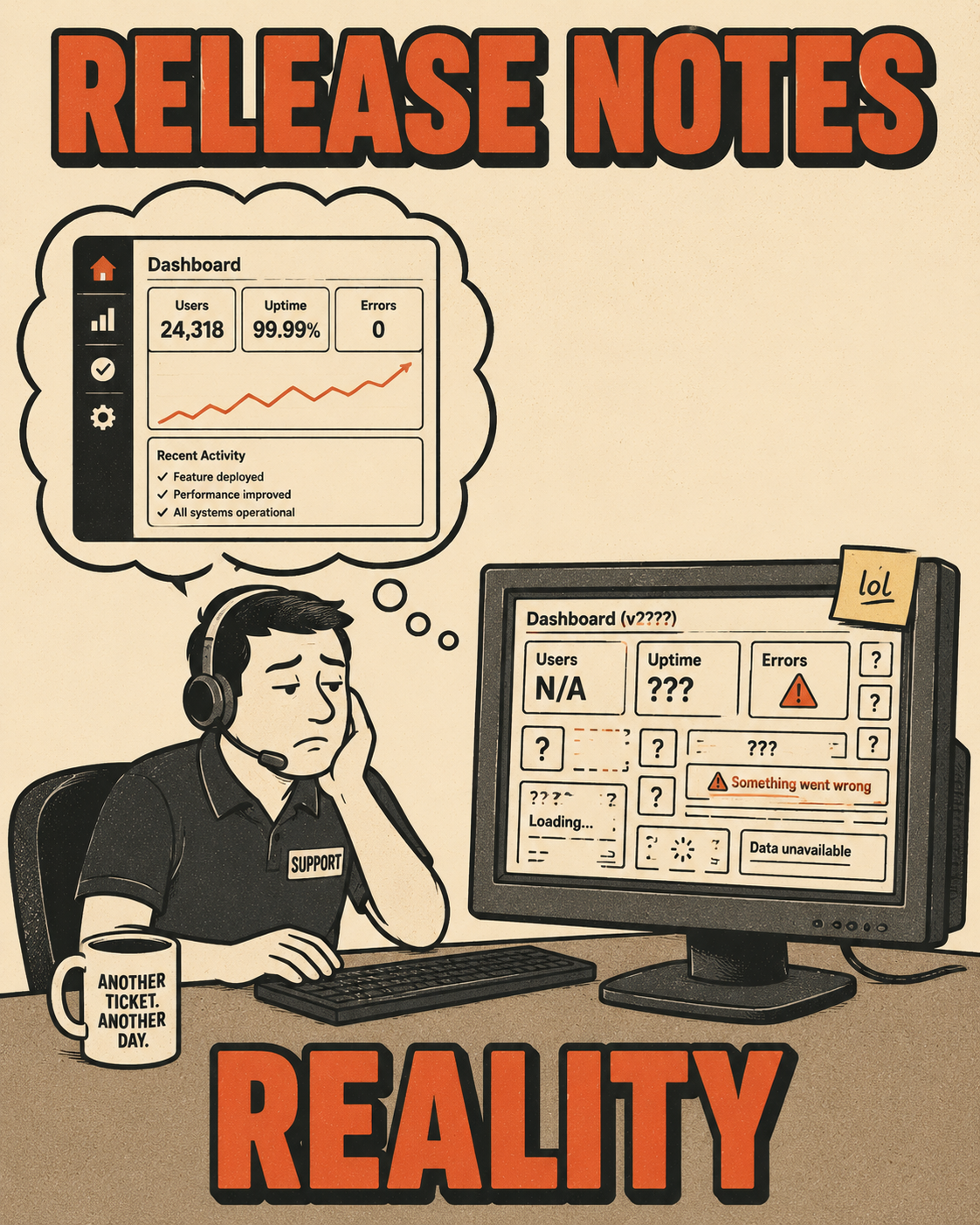

It is Tuesday afternoon, and your team just pushed a hotfix. The code shipped, the tests passed, and the deployment pipeline turned green. Everything worked exactly as designed.

On Wednesday morning, the support team gets a ticket about a broken workflow. They check the release notes. The release notes describe what was planned for the sprint, not what actually shipped in the hotfix. The support team is now working from assumptions that predate the issue.

This is the reality of high-velocity software development. When you ship multiple times a day, the gap between what engineering built and what the rest of the company thinks they built widens with every commit. A public changelog is the bridge across that gap. It is the definitive record of what actually changed, written for the people who have to live with those changes.

We all know we should probably have one. The problem is figuring out how to maintain it without turning a senior engineer into a full-time technical writer.

The Gap Between the Code and the Customer

A public changelog is not a dump of your git commit history. It is a curated, chronologically ordered list of notable changes made to a product over time.

Companies publish them for a few reasons. Transparency builds trust. When users can see a steady cadence of improvements, they feel like the product is alive and actively maintained. It also reduces the support load. When a user encounters a bug, a quick check of the changelog can confirm if it is a known issue that was just fixed, or a new problem entirely.

But the most compelling reason is competitive positioning. A well-maintained changelog signals competence. It shows that you are shipping a product, not just shipping code.

The Spectrum of Approaches

There are a few ways to approach this, and the tradeoffs are real.

On one end, you have the manual Markdown file in a repository. This is the docs-as-code approach. It is cheap, it lives next to the code, and developers understand it. The downside is that it requires discipline. If no one enforces the update, the file rots. Squarespace's engineering team documented their experience with exactly this pattern: integrating documentation into the same pull request workflow as code reduces friction, but it does not eliminate the need for someone to actually write the content.

In the middle, you have dedicated changelog tools like LaunchNotes, Beamer, and Headway. These platforms offer hosted pages, email notifications, and in-app widgets. They are great for visibility, but they introduce another tool into the stack. Someone still has to log in and write the updates.

On the far end, you have fully automated systems that pull from issue trackers, deployment pipelines, and commit messages to generate the log automatically. This sounds ideal, but it often results in a changelog that reads like a machine wrote it. "Fixed null pointer exception in data validation" is not a useful update for a customer.

GitHub's built-in release notes feature is a good example of what automation can and cannot do. It will generate a list of merged pull requests and contributors automatically, and you can configure it to categorize changes by label. What it cannot do is decide which of those pull requests actually matters to a user, or translate "refactor authentication middleware" into something a customer cares about.

What Actually Belongs in the Log

Not every commit needs to be in the public changelog.

Internal refactoring, dependency updates, and minor test tweaks belong in internal release notes. The public changelog should focus on what affects the user experience. The distinction matters because conflating the two creates a changelog that is either too noisy to read or too sparse to be useful.

If your audience is technical, be precise. Stripe's changelog is a good model here: entries are organized by API version, breaking changes are flagged clearly with migration paths, and nothing is vague. If your audience is less technical, focus on the benefit. "Reduced API response time by 35% for large datasets" is better than "Improved API performance."

The Operational Reality of Maintaining It

The hardest part of a public changelog is keeping it alive.

Most changelogs die after three months because the maintenance burden becomes too high. The team realizes that writing good release notes takes time, and they do not have spare capacity. Usually it is a failure of workflow design, not commitment.

Who actually writes these things? In small teams, it is often the product manager. They understand the changes and the user impact. As the company grows, this responsibility might shift to a technical writer or a dedicated product operations role. But regardless of who writes it, the process usually involves chasing down engineers to figure out what actually shipped. Engineers are not telling technical writers half of the changes they are making, which contributes to documentation drift and outdated content.

There are a few other common failure modes worth naming. Changelogs written in jargon engineers understand but users do not. Entries that over-promise on timelines ("coming soon" that never arrives). A backlog of undocumented releases that grows until the team gives up trying to catch up.

As release velocity increases, these problems compound. A team shipping twice a week has a manageable documentation problem. A team shipping multiple times a day has a structural one.

How to Stop Writing Them By Hand

The solution is to change the workflow so that the raw material is generated automatically, and human effort is reserved for the judgment calls that actually require a human.

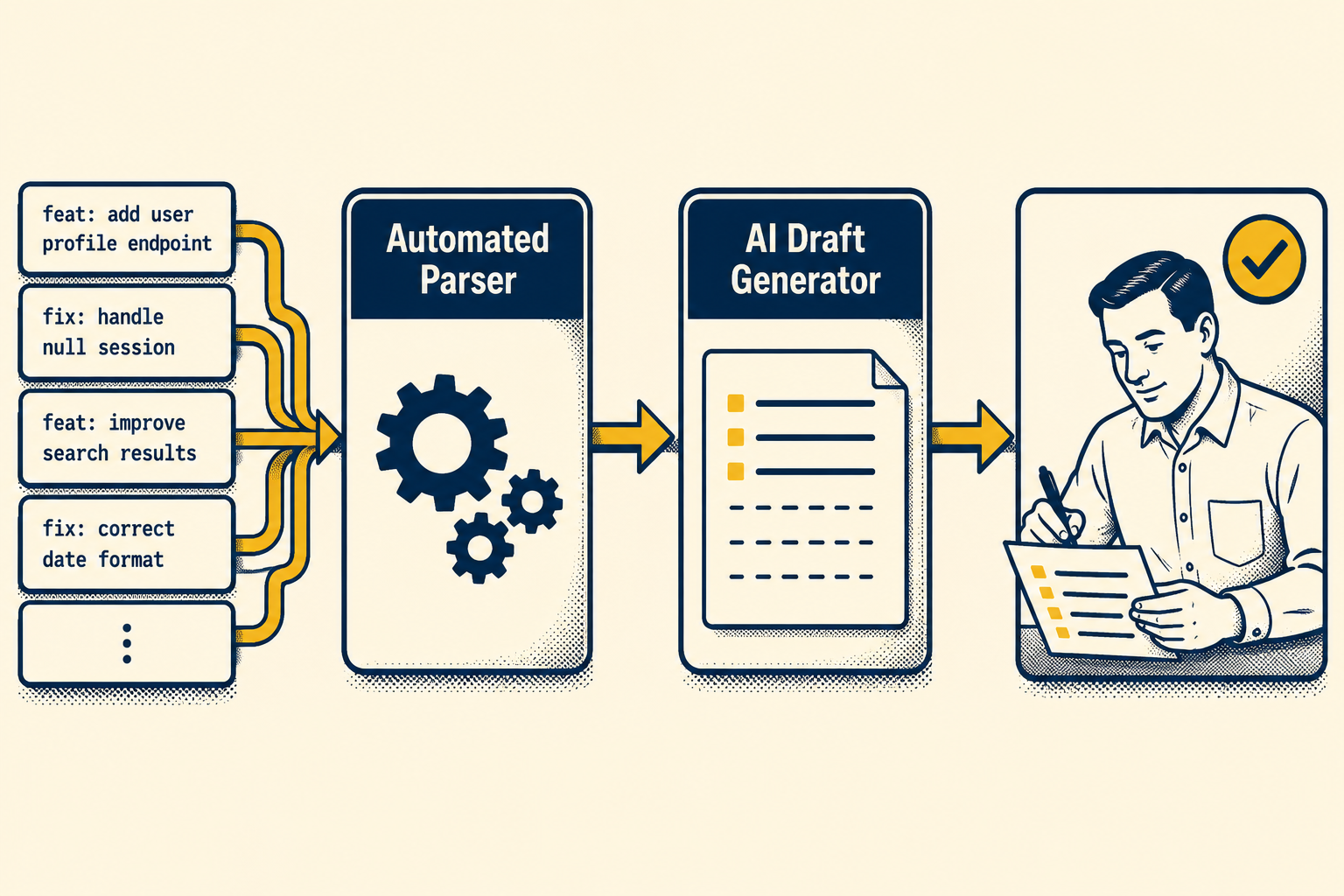

Structured commit conventions make this possible. If engineers prefix their commits with feat:, fix:, or BREAKING CHANGE:, automated tools can parse that history and generate a draft changelog. The Conventional Commits specification is lightweight enough that most engineering teams can adopt it without significant friction, and it integrates directly with tools like semantic-release and git-cliff that generate changelogs automatically.

But raw commits still need translation. AI can help bridge this gap. By analyzing code diffs and commit messages, AI can generate a first draft that translates technical changes into user-facing language. The human role shifts from writing from scratch to validating and editing. A product manager or senior writer reviews the generated draft, ensures the tone is right, and confirms that edge cases are covered.

This is what a well-functioning public changelog system looks like in practice. It is fast to update because the first draft arrives automatically. It is consistently maintained because the update is part of the deployment pipeline, not a separate task that competes for attention. It is written for the user's perspective because a human with product context reviews it before it goes out. And it scales as release velocity increases because the bottleneck is validation, not writing.

That is the model Doc Holiday is built around. It generates structured changelog content directly from engineering workflows, pulling from your codebase, tickets, and specs to draft release notes that reflect what actually shipped. The validation layer is what makes it work for lean teams: instead of rewriting every release from scratch, a reviewer confirms, adjusts, and publishes. The system handles the volume. The human handles the judgment.