The Engineering Leader's Guide to Sprint Summaries Stakeholders Actually Read

The sprint closes. The board clears. Someone on the engineering team sends out the summary: a list of ticket numbers, a few feature names, maybe a brief paragraph about what got merged. It lands in the inboxes of the sales director, the customer success lead, and the CEO.

Nobody reads it.

Not because they don't care about what shipped. They do. They just can't extract what they need from what they've been given. The summary was written for engineers, and everyone else is left to decode it.

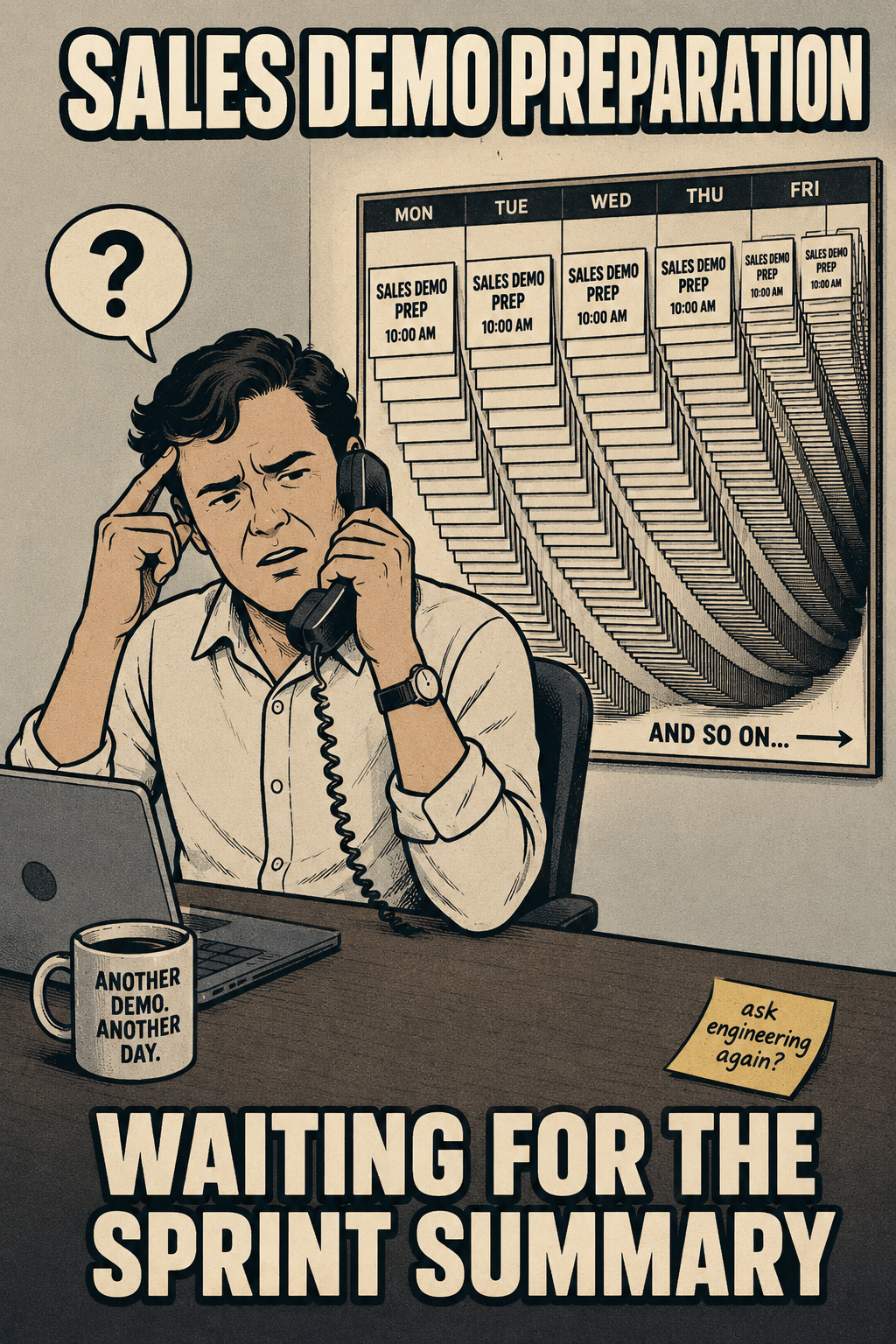

This is the sprint communication gap, and it is more expensive than most teams realize. Sales reps go into demos without knowing what new capabilities they can position. Customer success teams get blindsided when users ask about changes. Executives schedule recurring "what did we actually ship?" meetings that burn an hour every two weeks and answer nothing definitively. Research on coordination in software teams has found that when information doesn't flow cleanly between engineering and the rest of the organization, the downstream effects show up in productivity losses and increased failure rates, not just in mild confusion.

The fix is not more meetings. It is better translation.

What Your Sprint Summary Is Actually Saying

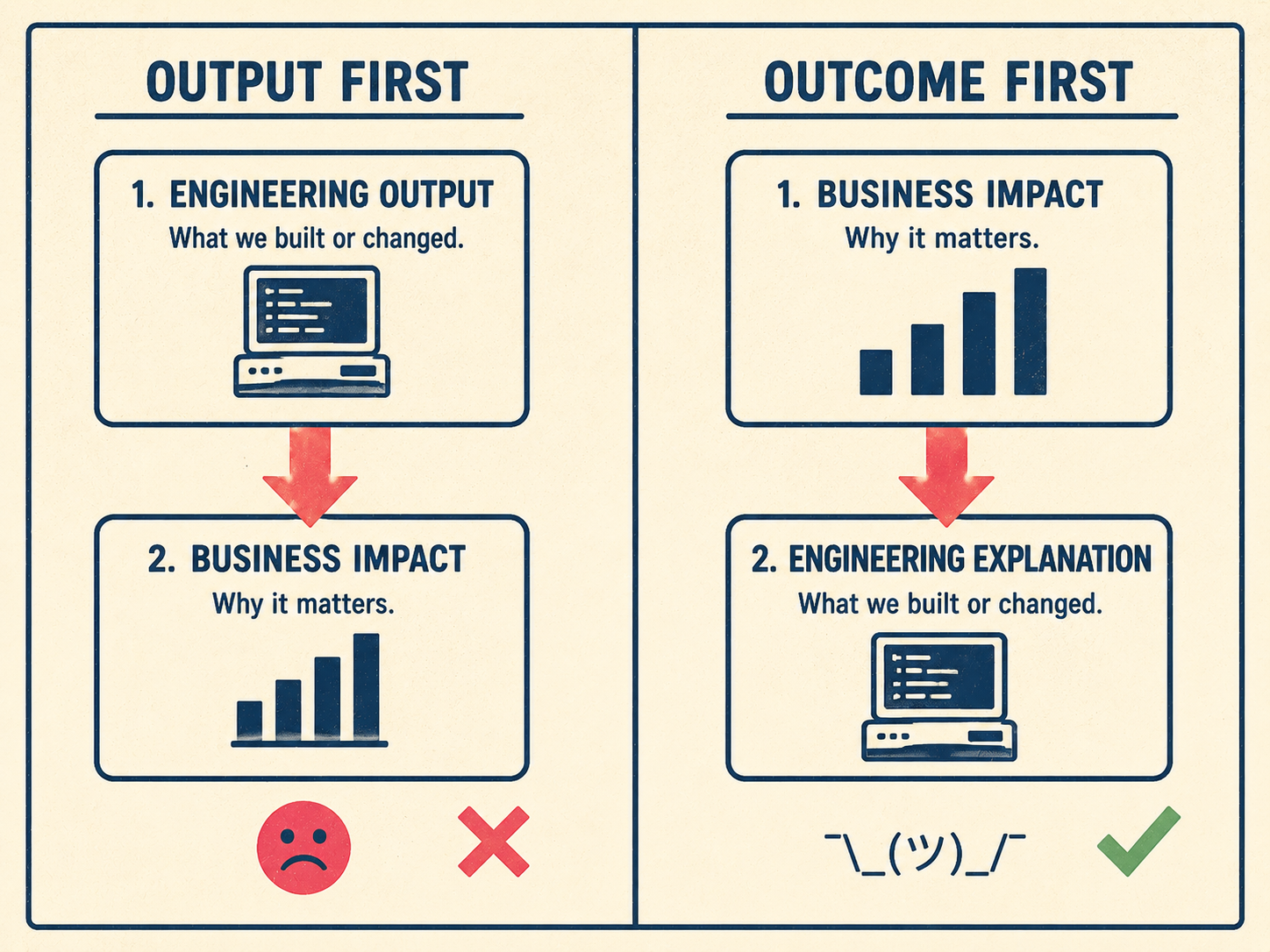

Most sprint summaries are output documents. They record what the team did. "Implemented OAuth 2.0 token refresh." "Refactored the data pipeline to reduce latency." "Resolved 14 bugs in the payments module."

These are accurate. They are also useless to anyone who doesn't already know what OAuth 2.0 token refresh means for a customer's daily workflow.

The 2020 Scrum Guide describes the sprint review as a working session to inspect the outcome of the sprint and determine future adaptations. Outcome, not output. The distinction matters because outcomes are what stakeholders can act on. "Customers no longer need to re-authenticate every 24 hours" is an outcome. "Implemented OAuth 2.0 token refresh" is an output. One of those sentences belongs in a stakeholder summary.

An empirical study of release note production and usage across 32,425 GitHub releases found significant discrepancies between what release note producers think they're communicating and what readers actually take away. The producers assume technical context that readers don't have. The readers want impact information that producers don't think to include. Both groups are operating in good faith. The document just isn't built for the audience that needs it most.

The fix starts with a simple reframe: lead with what changed for the customer or the business, then explain what the engineering team did to make it happen. Not the other way around.


How to Write One That People Actually Read

The most useful sprint summaries are segmented by audience. Not every stakeholder needs the same information, and a document that tries to serve everyone usually serves no one well.

Sales needs competitive positioning. What can they now say that they couldn't say before? Customer success needs support implications: what questions will users ask, and what's the right answer? Executives need strategic progress markers: how does this sprint move the product closer to the goals the board cares about?

Google's technical writing curriculum frames this as the core equation of good documentation: what the audience needs to know minus what they already know. The gap is what you're writing. For a sales rep, the gap is "what does this feature mean for the deals I'm working?" For the CEO, it's "are we on track?" For customer success, it's "what do I tell users who call in tomorrow?"
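As a rough illustration of that subtraction, the gap can be treated as a set difference. This is only a sketch: the function name `audience_gap` and the topic labels are invented for the example and do not come from Google's curriculum.

```python
# Hypothetical sketch: topic labels and audience profiles are invented.
def audience_gap(needs: set[str], already_knows: set[str]) -> set[str]:
    """Return what the summary must cover: what is needed minus what is known."""
    return needs - already_knows


# Assumed example profile for a sales rep.
sales_needs = {"new capability", "customer benefit", "availability date"}
sales_knows = {"availability date"}

# Whatever remains is the content the sales section has to supply.
print(sorted(audience_gap(sales_needs, sales_knows)))
```

Running the same subtraction per audience is one way to see why a single undifferentiated summary tends to over-explain for some readers and under-explain for others.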

A practical template that covers all three:

- What shipped: One to three plain-language sentences describing the change, written for someone who doesn't read code.

- Why it matters: The customer or business impact. Specific, not vague.

- What to tell customers: Literal talking points for sales and CS. This is the section most teams skip, and it's the section that generates the most downstream value.

- What's next: One sentence on the upcoming sprint's focus, so stakeholders can calibrate expectations.
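For teams that want to keep the template consistent from sprint to sprint, the four sections above could be captured in a small data structure. The sketch below is one possible shape, not a prescribed format: the `SprintSummary` class, its field names, and the `render` helper are all assumptions made for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class SprintSummary:
    """One entry following the four-part template (hypothetical field names)."""
    what_shipped: str
    why_it_matters: str
    talking_points: list[str] = field(default_factory=list)
    whats_next: str = ""


def render(summary: SprintSummary) -> str:
    """Render the entry as plain text suitable for email or chat."""
    lines = [
        f"What shipped: {summary.what_shipped}",
        f"Why it matters: {summary.why_it_matters}",
        "What to tell customers:",
    ]
    # Each talking point becomes its own indented bullet.
    lines += [f"  - {point}" for point in summary.talking_points]
    lines.append(f"What's next: {summary.whats_next}")
    return "\n".join(lines)
```

Making the talking points a required part of the structure is a deliberate choice here: an empty list shows up visibly in the rendered output, which makes the most commonly skipped section harder to skip.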

The table below shows how the same sprint output translates differently depending on who's reading it.

Timing matters too. A sprint summary that arrives three days after the sprint closes is less useful than one that arrives the same day, through the channel the stakeholder actually checks. Executives often prefer email. Sales and CS teams tend to live in Slack. Research on team communication consistently finds that the medium shapes whether information gets used, not just whether it gets sent.

The Part That Doesn't Scale

Here is the problem with everything above: it takes time to write.

A good, segmented sprint summary for a two-week sprint might take two to three hours to produce well. That is two to three hours of a VP of Engineering's or a senior technical writer's time, every sprint, on a task that is important but not technically demanding. Most teams either skip it, produce a low-quality version under deadline pressure, or rotate the responsibility until it quietly stops happening.

Research on automated pull request description generation found that developers frequently omit PR descriptions entirely because of the time and cognitive load required. The same dynamic plays out at the sprint level. The information exists in the commit logs, the ticket metadata, and the PR descriptions. The bottleneck is the translation step.

This is where automation becomes a force multiplier. Studies on automated release note generation, including the ARENA system published in IEEE Transactions on Software Engineering, demonstrated that systems extracting changes from version control and issue trackers can produce summaries that closely approximate what developers would write manually. The Ascend.io engineering team described the same pattern in practice: raw commit messages don't help stakeholders understand what changed, but an AI pipeline that harvests commits, generates a summary, and routes it for human review before publication produces consistent, useful output at a fraction of the manual effort.

The key word is review. The automation handles the drafting. A skilled writer or engineering leader handles the framing, the audience segmentation, and the accuracy check. That division of labor is what makes the system work.
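The division of labor described above can be sketched as a three-step pipeline with a review gate. This is a minimal illustration under stated assumptions, not how any particular product works: `Draft`, `draft_from_commits`, `review`, and `publish` are hypothetical names, and the drafting step is a simple placeholder where a real system might call a summarization model.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Draft:
    text: str
    approved: bool = False


def draft_from_commits(commits: list[str]) -> Draft:
    """Placeholder drafting step: just lists the commit messages."""
    bullets = "\n".join(f"- {message}" for message in commits)
    return Draft(text=f"Changes this sprint:\n{bullets}")


def review(draft: Draft, reviewer: Callable[[str], Optional[str]]) -> Draft:
    """The reviewer returns edited text to approve, or None to reject."""
    edited = reviewer(draft.text)
    if edited is None:
        return Draft(text=draft.text, approved=False)
    return Draft(text=edited, approved=True)


def publish(draft: Draft) -> str:
    """Refuse to publish anything that has not passed human review."""
    if not draft.approved:
        raise ValueError("draft has not passed human review")
    return draft.text


# Usage: the human reviewer reframes the output as an outcome.
raw = draft_from_commits(["Implemented OAuth 2.0 token refresh"])
final = review(
    raw,
    lambda _text: "Customers no longer need to re-authenticate every 24 hours.",
)
print(publish(final))
```

The point of the sketch is the gate in `publish`: the automated draft can never reach stakeholders unless a person has approved or rewritten it.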

Most teams know what a good sprint summary looks like. They just don't have a system that produces one reliably, sprint after sprint, without pulling senior people away from higher-leverage work.

Doc Holiday generates sprint summaries and release notes from the same data engineering already produces (commit logs, PR descriptions, ticket metadata) and gives teams a structured workflow to review, customize, and publish updates for each audience. The drafting happens automatically. The judgment stays with the people who have it.