What is a Release Train in Software Engineering?

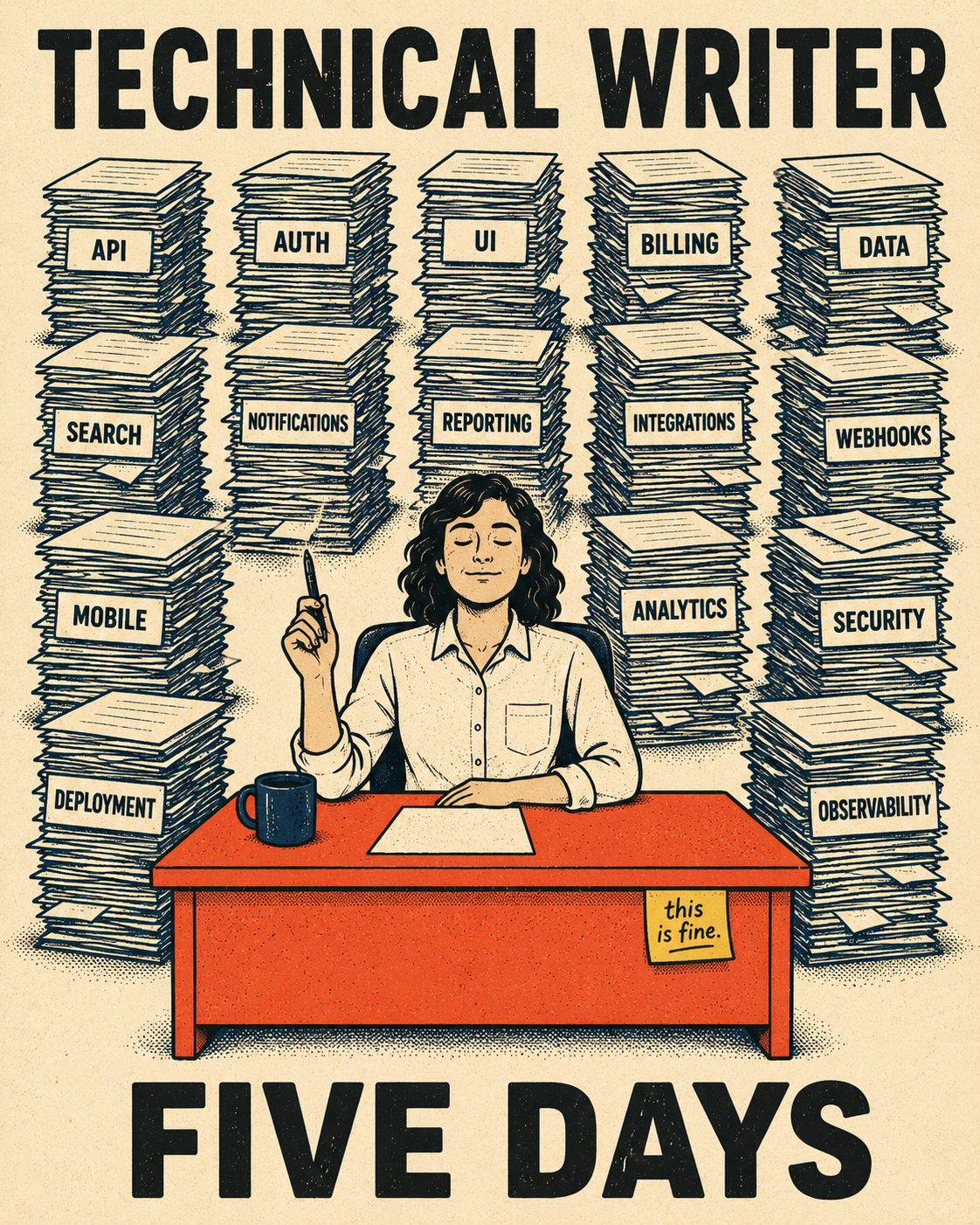

It's the Thursday before a quarterly release. Slack has been quiet all sprint, and then, in the span of about four hours, fifteen different teams drop their changes into the shared documentation channel. API updates, a new authentication flow, three deprecated endpoints, a UI overhaul, and a handful of "minor" changes that turn out to be anything but. The code freeze is Monday. The release goes out Wednesday. The technical writer has five days to document all of it.

That scenario is not a planning failure. It is the release train working exactly as designed.

A release train is a fixed-cadence delivery model where multiple teams synchronize their releases into periodic deployments. Instead of shipping features the moment they're ready, teams align their work to a shared schedule — often every two weeks, monthly, or quarterly — and coordinate a single go-live. The term comes from SAFe (Scaled Agile Framework), which formalizes the concept as the Agile Release Train, but the pattern itself predates SAFe and shows up across methodologies wherever coordination cost is high.

The core mechanics are straightforward: a feature freeze date closes the window for new work, an integration period lets teams merge and stabilize their changes, a collective testing phase catches cross-team regressions, and then everything ships together. The train departs on schedule, whether or not every passenger made it to the platform.

Organizations adopt this model because it solves a real problem. When dozens of teams are building interdependent software, continuous deployment creates coordination chaos. Every merge is a potential conflict. Every deployment is a risk to adjacent systems. A shared cadence turns that chaos into something manageable: predictable planning cycles, structured dependency management, and a communication rhythm that enterprise customers can actually plan around. After adopting SAFe and release trains, Johnson Controls was able to ship new releases two to four times more often than before. Cisco saw a 40 percent decrease in defects after implementing SAFe and continuous delivery.

The release train is especially common in B2B SaaS, regulated industries, and large platform companies. Enterprise customers often need to schedule upgrades, train their teams, and coordinate with their own IT departments before a new version lands. A quarterly cadence gives them a predictable window. It also consolidates communication: instead of a drip of weekly update emails that nobody reads, customers get one substantive announcement they can actually act on.

How the Train Actually Works

The backbone of the release train model is the Program Increment (PI), a fixed timeframe (usually eight to twelve weeks) during which multiple teams plan, build, and integrate their work. PI Planning is a two-day event where all teams align on goals, surface dependencies, and commit to a shared set of objectives for the increment. Think of it as a synchronized kickoff where everyone agrees on what the train is carrying before it leaves the station.

Within the PI, teams work in shorter sprints (usually two weeks) and converge toward a shared integration point at the end. The final sprint is often an "Innovation and Planning" sprint, which is where integration testing, documentation, and release preparation happen. This is also where the documentation bottleneck becomes acute.

The release train pattern exists outside SAFe too. Many organizations run informal versions of it: a monthly release window, a code freeze two weeks prior, a shared staging environment for integration testing. The mechanics vary, but the structure is the same. Multiple teams, one departure time.

What changes when teams adopt this model is mostly about coordination overhead. Release trains require explicit dependency management — teams have to surface what they need from each other early enough to resolve it before the freeze. They require shared tooling for integration and testing. And they require a communication function that can translate the output of fifteen engineering teams into something coherent for customers.

That last requirement is where things get complicated.

When the Train Leaves, Documentation is Still on the Platform

The release train's predictability is a genuine advantage. The documentation problem it creates is a genuine cost.

When features ship continuously, documentation can trickle out alongside them. A writer picks up a feature, documents it, and moves on. The work is distributed across time. With a release train, that distribution collapses. Everything ships at once, and everything needs documentation at once.

A 2022 mapping study on documentation in continuous software development, published in Information and Software Technology, found that documentation is frequently out of sync with software, treated as waste, and deprioritized under short-term delivery pressure. Those tendencies are structural, not individual. They get worse when the delivery window compresses.

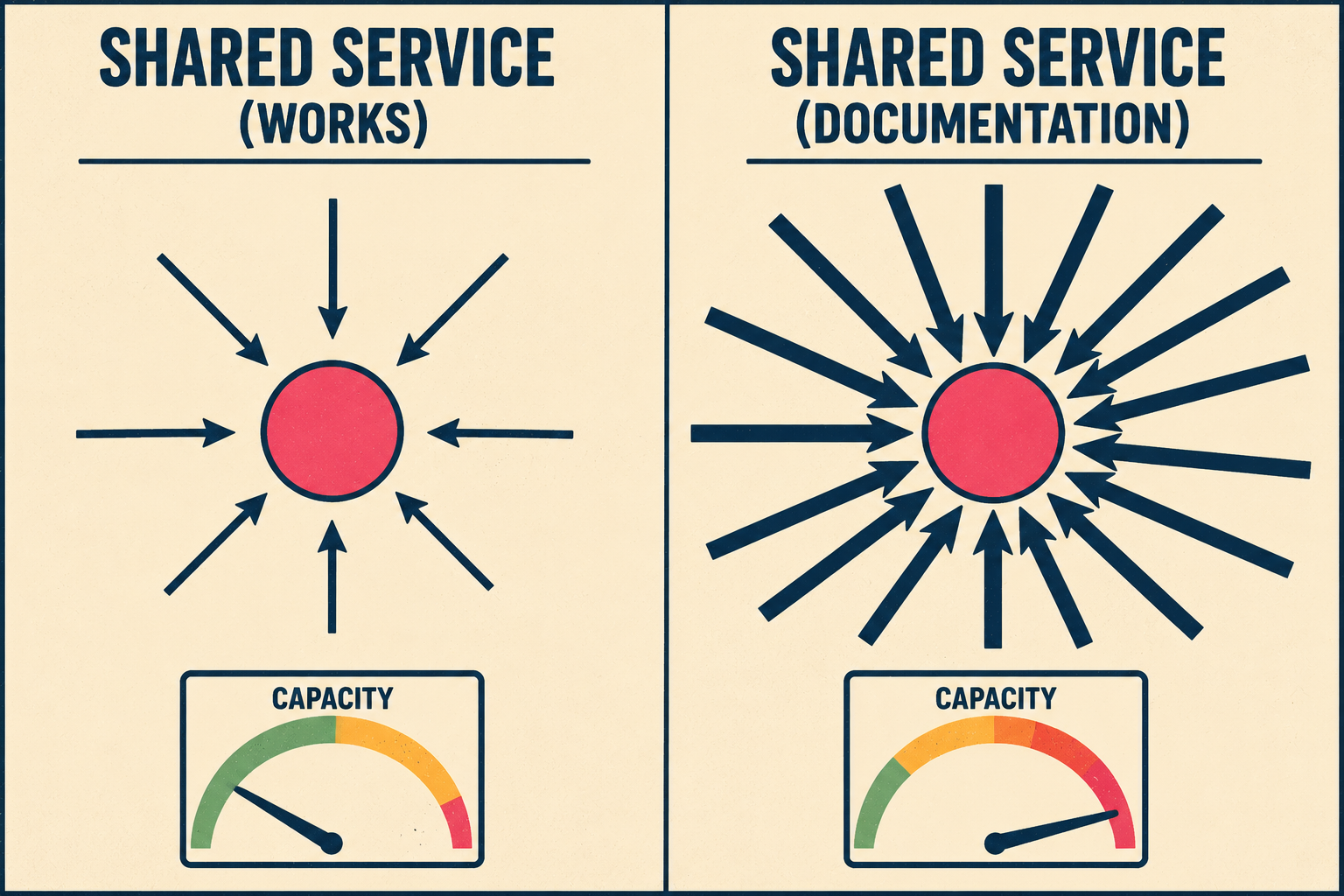

The technical writing team in a SAFe organization is typically classified as a Shared Service — a specialty function that supports multiple Agile teams without being embedded in any of them. That structure makes sense for functions that are needed occasionally. It works less well for documentation, which is needed for nearly every feature. A small writing team serving fifteen engineering teams during a single release window is not a shared service. It is a single-lane bridge with fifteen lanes of traffic.

The result is predictable. Documentation gets compressed into the final sprint. Writers work from incomplete information because engineers are still finishing features. Review cycles get skipped. Release notes go out thin or late. And the customers who were supposed to benefit from the predictable communication window get an announcement that doesn't actually explain what changed.

Poor documentation costs mid-sized engineering teams up to $2 million annually in lost productivity, delayed projects, and rework. That number doesn't show up on a single line item. It shows up in support tickets, in onboarding time, in the senior engineer who spends an afternoon explaining an API change that should have been in the changelog.

The release train doesn't cause this problem. It concentrates it.

The part that usually gets skipped

The obvious fix is to start documentation earlier. Get writers into PI Planning. Assign documentation tasks to sprints. Build the release notes incrementally instead of scrambling at the end.

That helps. It doesn't solve it.

The underlying issue is that documentation in a release train environment requires aggregating output from many teams, maintaining consistency across all of it, and hitting a hard deadline. That is a throughput problem, and throughput problems don't get solved by starting earlier. They get solved by changing the ratio of inputs to outputs.

This is where automated documentation generation changes the math. The model is not "AI writes the docs and humans disappear." The model is: AI generates first drafts from code commits, pull requests, and ticketing data; a writer reviews the output for accuracy, adds context where needed, and ensures consistency across all teams' contributions. The train still ships on schedule, but the documentation is ready at departure instead of catching up afterward.

Research on LLM-powered release note generation shows that systems trained on commit and pull request data can produce output that ranks first for completeness and organization compared to manually written release notes (Heshyan et al., 2025). The Stack Overflow developer survey found that developers spend more than 30 minutes a day searching for solutions to problems that good documentation would have answered — and that AI-assisted documentation, with human oversight, is the most practical path to closing that gap. A case study at a large European bank found that LLM-generated changelogs improved continuously as engineers reviewed and approved the output, creating a feedback loop that made the system more accurate over time.

The governance question is real. AI-generated documentation needs human validation, especially for accuracy and context. But that validation is a fundamentally different task than writing from scratch. A writer reviewing structured output from fifteen teams can maintain quality at the train's pace. A writer drafting from scratch cannot.

The release train's promise is predictable delivery. That promise only holds if documentation ships with the code, not after it. Doc Holiday generates release notes, changelogs, and API references synthesized from the engineering work happening across all teams on the train, giving the writer a structured output to validate and manage rather than a blank page and a Monday deadline.