How to Automatically Update Documentation When Code Changes

If you run an engineering organization of any meaningful size, you have probably noticed a recurring pattern. A team ships a new feature, merges the code, and celebrates the release. Three weeks later, a customer files a support ticket because the API endpoint they are trying to use does not match the documentation. The documentation still describes the old behavior. The code changed, but the words describing the code did not.

The operational reality is that code changes constantly, while documentation updates sporadically. This gap compounds over time, creating version drift, stale API references, and customer confusion. The fix is not to tell your engineers to "just write better docs." That is a workflow problem masquerading as a behavioral one. To fix it, you have to reduce the latency between a code merge and a documentation publish.

This does not mean eliminating human judgment. It means collapsing the feedback loop so that the machine handles the structured, repetitive updates, and the humans handle the nuance.

Anyway. We have all agreed that stale documentation is a problem, and we seem to be having trouble figuring out how to actually automate the fix without building fragile, bespoke scripts that break every time someone changes a repository name.

What "Automatic" Actually Means

When we talk about automating documentation, we need to be precise about what is actually being generated. There is a meaningful difference between tools that surface code changes for human review and tools that generate updated documentation directly from structured inputs.

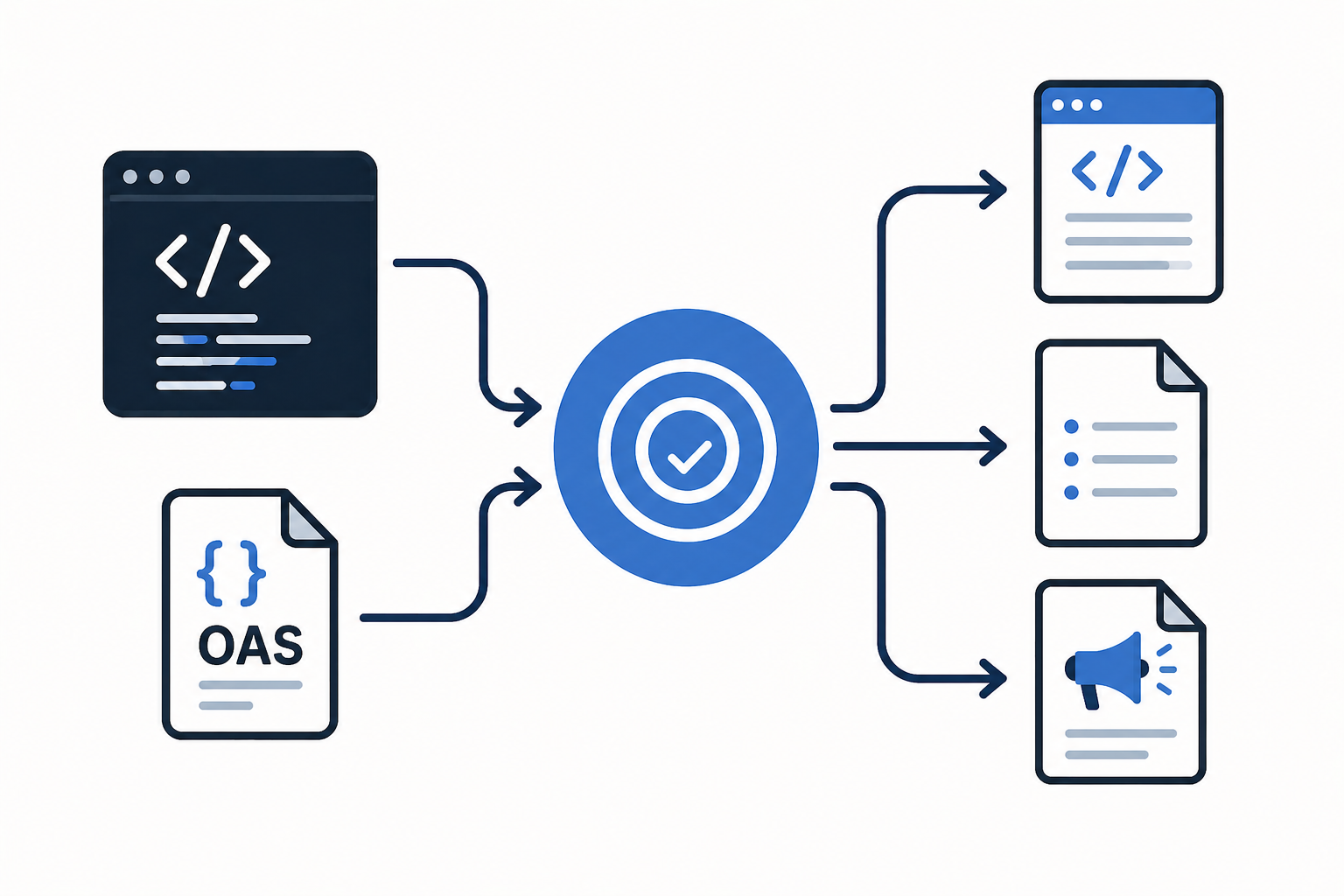

Some documentation types can be generated reliably from code artifacts. API references, changelogs, and interface specifications are excellent candidates for full automation. If you have an OpenAPI specification, you can generate your API documentation directly from it, and the documentation will always match the implementation because both derive from the same source. If your team uses Conventional Commits, you can automatically generate release notes and changelogs from your Git history. These are structured inputs that map cleanly to structured outputs.

Other documentation types still require human interpretation. Conceptual guides, troubleshooting workflows, and architecture decisions cannot be fully automated because they require context that does not exist in the codebase. An automated system can tell you what changed in an API endpoint, but it cannot tell you why the engineering team chose to deprecate the old endpoint in favor of a new one. A 2024 study on design-implementation-documentation drift found that the gap between what code does and what documentation says it does is a persistent, measurable problem in software projects, and that the gap widens precisely in the areas where human context is hardest to encode.

Organizations that successfully implement documentation automation understand this distinction. They automate the API references and the changelogs, freeing up their technical writers to focus on the high-value content that requires judgment.

The Technical Mechanics

To actually build this, you need infrastructure that listens for changes, extracts the relevant information, and publishes the result.

The most common approach is the docs-as-code model, where documentation is treated with the same rigor as software. This involves storing documentation in version control alongside the code, and using CI/CD pipelines to automate the publishing process. Cloudflare, for example, runs their entire documentation through a public GitHub repository and GitHub Actions, which validates links, builds the site, and deploys changes on every merge.

When a developer merges a pull request, a webhook or CI/CD trigger fires. This trigger kicks off a pipeline that parses the code for changes. If the team is using OpenAPI, the pipeline runs a linter to validate the specification, generates the HTML documentation using a tool like Redoc or Swagger UI, and deploys the updated site. Automating this with GitHub Actions requires a workflow file that watches for changes to the spec file and triggers the build and deploy steps automatically.

If the team is generating a changelog, the pipeline parses the commit messages. Tools like semantic-release can read commits formatted according to the Conventional Commits specification and automatically generate a Markdown changelog, determine the correct semantic version bump, and publish a new release. The OpenAPI Initiative's own best practices guide recommends treating OpenAPI descriptions as first-class source files committed to version control, participating in CI/CD processes from the start.

This infrastructure integrates cleanly with existing documentation platforms. Whether you are deploying to GitHub Pages, Netlify, or a dedicated developer portal like Backstage, the CI/CD pipeline handles the heavy lifting. The Squarespace Domains engineering team describes exactly this workflow in their docs-as-code writeup: every documentation change flows through the same pull request review process as code, and merging to the main branch automatically updates the live documentation. The engineering work required to set this up is not trivial, but it is a one-time investment that pays compounding dividends.

The Quality Control Layer

There is a risk here, of course. If you automate the publishing pipeline, you automate the ability to publish mistakes at scale. Organizations have to prevent automated systems from publishing incorrect, incomplete, or misleading content.

The best implementations treat automation as a first draft that gets refined, not a final publish that runs unsupervised.

Automated schema checks are the first line of defense. If an OpenAPI specification is invalid, the CI/CD pipeline should fail the build before the documentation is ever generated. But automated checks can only catch structural errors. They cannot catch semantic errors or missing context.

This is why human review remains critical. Human-in-the-loop automation is a workflow design approach where automated systems handle routine tasks, but humans are intentionally inserted at key decision points to validate the output. In a documentation pipeline, this looks like a staging environment where technical writers review the generated API reference before it goes live, or a review queue where a writer approves the automated changelog before it publishes.

Skilled technical writers are not the bottleneck being removed by automation. They are the quality gate being elevated. They validate the output, catch edge cases, and ensure consistency across generated and handwritten content. Research on automated code documentation generation using LLMs confirms that automated systems produce high-quality output on structured, well-defined tasks, but that quality degrades when context is ambiguous or missing. The human reviewer is what converts "good enough for a first draft" into "accurate enough to publish." Managed automation scales. Unmanaged automation accumulates errors.

Where to Start

The decision framework is straightforward: start with high-volume, low-ambiguity updates.

Release notes generated from tagged commits are a perfect starting point. The input is structured, the output format is predictable, and the update frequency is high. API reference docs pulled from OpenAPI specs are another excellent candidate. Changelogs derived from issue trackers or commit histories are a third.

Conceptual guides, tutorials, and architecture overviews are poor candidates for full automation. They lack the structured inputs required for reliable generation. However, they can still benefit from automated scaffolding. An automated system can generate a draft of a new tutorial based on a recently merged feature, which a technical writer then refines and expands. The 2023 survey on document automation architectures distinguishes between document assembly (reliable, automatable) and document generation (requires human judgment), which maps almost exactly to this distinction between reference documentation and conceptual documentation.

Prioritize based on update frequency, consistency requirements, and available structured inputs. Automate the things that change often and have clear rules. Leave the rest to the humans.

The Operational Result

An organization that has automated the generation of release notes, changelogs, and API references has eliminated the most time-sensitive documentation bottleneck. Their technical writers are no longer spending hours manually updating parameter descriptions or formatting release notes. Shopify's engineering team has written about the productivity impact of treating documentation as a product: when the infrastructure is right, documentation becomes a natural part of the development process rather than an afterthought.

Those writers can now focus on high-value content that requires judgment. Migration guides, best practices, troubleshooting workflows. The automation handles the repetitive, structured updates, while the humans handle the complex, contextual work.

This is the operational model that Doc Holiday enables. Doc Holiday generates release notes, API documentation, and changelogs directly from engineering workflows. It drafts the output from code commits and product specs, and provides the validation structure that lets a small team manage and scale that output without rebuilding a large headcount. The AI generates the first draft; a senior writer reviews it for accuracy in a dashboard; edge cases get flagged; patterns get fed back. It is the infrastructure that makes continuous documentation actually work.