How to Automatically Trigger Documentation Updates When a Gitlab Merge Request is Merged

If you ran a mid-sized engineering organization, and a million Yale-educated technical writers showed up at your corporate headquarters offering you their services for $5.92 an hour, you would have four options. You could hire them all and assign one to every developer. You could hire a few and put them in a centralized team. You could ignore them and tell your engineers to write their own docs. Or you could try to build a system that does the writing for you.

We are currently living through the fourth option. We have the technology to generate documentation automatically, and we seem to be having trouble figuring out how to make it actually work.

The problem is not the generation itself. The problem is the trigger. Documentation dies in the repository because there is no forcing function to keep it alive. Merge requests ship, features go live, and the documentation update either doesn't happen or becomes a JIRA ticket that lives in the backlog forever. Research on documentation drift confirms this is not a discipline problem but a structural one: when documentation lacks the equivalent enforcement that CI pipelines apply to code, it decouples from the codebase and accumulates debt that compounds over time.

The answer is to tie the documentation update to the merge event itself. If the code merges, the documentation pipeline runs. Engineers don't have to remember anything.

Anyway. Here is how to actually build that in GitLab.

How the Trigger Actually Works

GitLab provides several mechanisms to intercept a merge event and route it to a documentation system. The right choice depends on how much structure your team is willing to tolerate.

The most direct approach is a CI/CD pipeline job scoped to the default branch. You configure a job in .gitlab-ci.yml using the rules keyword, checking that $CI_PIPELINE_SOURCE == "push" and $CI_COMMIT_BRANCH == $CI_DEFAULT_BRANCH. This job runs every time a merge request lands on main. It requires zero behavioral changes from engineers. If the code merges, the pipeline runs, and the pipeline can do anything: regenerate an OpenAPI spec, rebuild a static docs site, push a changelog entry to an external system.

Pipelines execute code. If you need to push the event data to an external system, you need webhooks. GitLab webhooks fire on merge request events at the project or group level. When a merge request is closed or merged, GitLab sends an HTTP POST payload containing the author, the labels, the description, and the state. This is how you route merge events to an external documentation engine, or how you trigger a Slack notification to a writer who needs to review the output.

The limitation of webhooks is that they push what they have. If you need more context — the specific files changed, the diff, the commit messages — you call back into the GitLab Merge Requests API. The changes[ ] array in the API response details every file modified, added, or deleted. A team that regenerates API documentation whenever a file in /api is touched is likely using a webhook to catch the merge and an API call to check the diff before deciding whether to run the doc generation job.

For teams that want to parse structured information from the merge request description itself, GitLab merge request templates let you define a required format. The CI pipeline can then read the MR description via the API, extract the structured fields, and route them to the appropriate documentation workflow.

The Part Everyone Gets Wrong

Automated triggers only work if engineers give the system something to work with.

If the merge request description is empty, or the commit message says "fix," no amount of pipeline logic will generate useful documentation. Research on commit message quality consistently shows that low-quality messages impede code comprehension and correlate with higher defect rates. The same principle applies to documentation: garbage in, garbage out, except the garbage is invisible until a customer asks why the changelog says nothing about the feature they just found.

The real challenge is not the trigger mechanism. It is ensuring that the information needed for documentation is captured at the point where the engineer actually has context.

The most effective teams solve this by gating the merge. They use merge request templates with a required "User-Facing Changes" section. They enforce Conventional Commits, requiring every commit to be prefixed with feat:, fix:, or docs:. They use approval rules to block merges that touch user-facing code but lack a docs:required label. GitLab's own Changelog API depends on this: it reads commit trailers to categorize changes, and if the trailers aren't there, the generated changelog is empty.

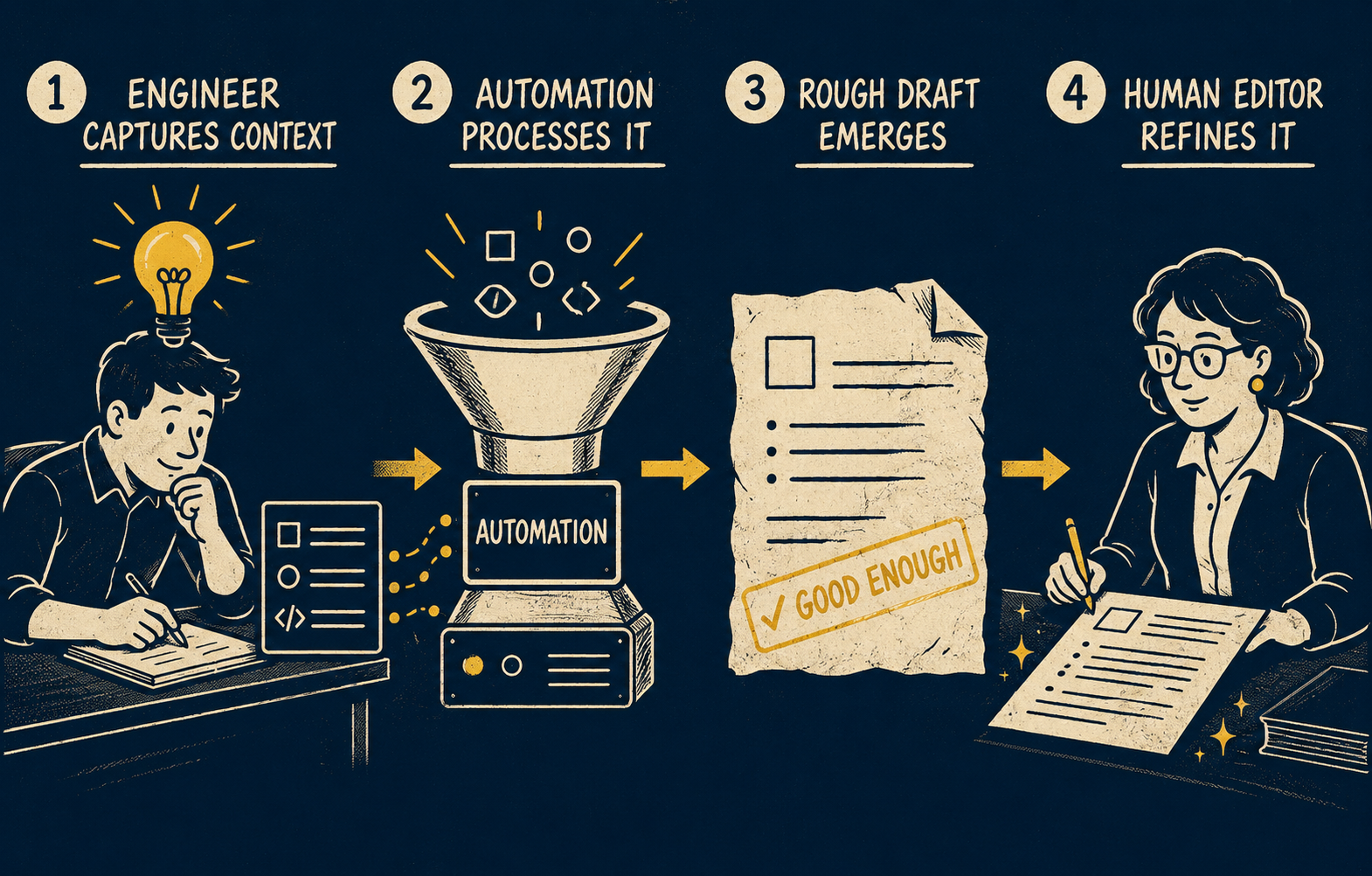

These constraints force the engineer to provide structured input at the moment they have the most context. The automation then takes that structured input and turns it into a draft. The draft is not perfect. That is fine. The draft is not supposed to be the final artifact; it is supposed to be the starting point that a writer or senior engineer reviews and publishes.

This is the distinction that most automation discussions skip. They describe the trigger and the generation and then imply the output is ready to ship. It rarely is. Unvalidated automation produces documentation that is technically current but functionally useless: accurate timestamps on changes no one understands, changelogs that list every refactored variable as a feature, API references that describe the parameters but not the behavior.

What Lean Teams Should Actually Build

If you have one technical writer or a senior engineer who owns docs part-time, their job should be validating and managing the output of automated systems, not manually rewriting changelogs for every merge.

The right setup is: engineer provides structured input in the MR (template, commit convention, label), automation generates a draft, human validates and publishes. This is not a novel idea. It is the same model that works for automated testing: the CI pipeline runs the tests, a human reviews the failures, and the system flags the edge cases that need attention.

The practical checklist for a lean team looks like this:

- Add a merge request template with a "User-Facing Changes" field and mark it required

- Enforce Conventional Commits via a linting step in the pipeline (tools like

commitlintintegrate directly with GitLab CI) - Add a CI job scoped to the default branch that reads the MR description and commit messages and generates a draft changelog entry

- Route that draft to a review queue rather than publishing it automatically

- Use a docs:skip label for internal refactors so the system knows not to generate a user-facing entry

The goal is not to eliminate human judgment. The goal is to eliminate the manual work that doesn't require judgment: pulling the diff, formatting the entry, routing it to the right place. The human's job is the part that actually requires knowing what the change means.

Teams that skip the review queue and publish automatically tend to discover the problem when a customer reads a changelog entry that says "Refactor authentication middleware for performance" and asks what that means for their integration. It means nothing, probably. But now someone has to explain that.

This is what the system is actually trying to produce: release notes, changelogs, and API references that reflect what shipped and that someone with context has validated. Doc Holiday generates initial artifact drafts from the engineering workflow, taking the structured input from your GitLab merge requests and commits and producing first drafts that a senior writer or engineer can review in a dashboard before publishing. For lean teams, that is the difference between documentation that stays current and documentation that dies in the backlog.