How to Auto-generate API Documentation From Github Commits

A developer merges a PR that removes a required parameter from a payment endpoint. The change ships. The docs still list that parameter as required. Somewhere, a developer on the other side of the world is building an integration right now, following those docs, and wondering why their calls keep failing.

This is not an edge case. According to research by APIContext across 650 million API calls, 75% of production APIs have variances from their published specifications. More than half of API specs haven't been updated in six months. The gap between what ships and what's documented is the default state of most engineering teams, not an exception.

The appeal of generating docs directly from commits is obvious. The commit already describes what changed. The diff shows exactly what moved. If you can extract that information automatically and push it into your documentation, you close the gap without adding headcount.

That's achievable. But it requires clean input, the right tooling, and a realistic understanding of what a commit can and cannot tell you.

What a Commit Actually Knows

When an API changes, the git diff and the associated commit message contain specific, structured information. Automated tools can reliably extract endpoint additions and removals, parameter changes (new fields, removed fields, type changes), authentication requirement shifts, and payload structure modifications. Tools like oasdiff can analyze OpenAPI specifications across commits and detect these changes, categorizing them by severity and flagging breaking changes against hundreds of rules.

That's the good news.

The bad news is that commits are structurally silent on everything else. They don't explain why a breaking change was necessary. They don't describe migration paths. They don't provide the usage context that makes a reference doc actually useful to someone building against your API. A commit that says remove deprecated auth_token param tells you what happened; it doesn't tell you what to do about it if you were relying on that parameter.

Research mapping 97 studies on commit messages and software maintenance found that while commit messages carry crucial information for understanding code changes, they often lack the intent and context needed to convey the full meaning of a change to future readers. The most useful artifacts in combination with commit messages are the diffs themselves — which confirms that automated extraction works best for structural changes, not narrative explanation.

There's also a prerequisite that teams often skip: this approach only works if your team writes useful commit messages. Single-word commits (fix, update, wip) produce useless documentation. The Conventional Commits specification provides a lightweight standard for structured commit messages that automation can actually parse — types like feat, fix, and BREAKING CHANGE give tooling the signal it needs to categorize changes correctly. If your team isn't writing commits at that level of specificity, fix that first. The automation is downstream of the input quality.

Three Ways to Wire This Up

The simplest approach is a webhook that fires on merge to your main branch. GitHub sends a payload with the commit data, a separate service parses the diff against your OpenAPI spec, and the documentation updates. This works, but it requires maintaining an external service to handle the webhook payloads, which adds operational overhead.

GitHub Actions is the more integrated option. You define a workflow that triggers post-merge, checks out the code, validates the OpenAPI spec, generates the documentation, and deploys it. A workflow using Redocly CLI can lint the spec before building (so broken specs don't deploy), generate static HTML, and push to GitHub Pages in a single pipeline. The key configuration is scoping the trigger to changes in the spec file itself, so documentation builds don't run on every commit to the repo.

YAML

on:

push:

branches:

- main

paths:

- 'spec/openapi.yaml'That path filter alone eliminates most unnecessary builds.

For changelog generation specifically, tools like Bump.sh automate the changelog update with each new release of an API document. Each deployment generates a new changelog entry summarizing the key changes since the previous one, with color-coded visual indicators for additions, modifications, and deletions. Breaking changes surface at the top of the entry. Consumers can subscribe to weekly summaries via email or RSS.

The SmartBear State of API report found that 62% of teams cite limited time as the biggest obstacle to keeping documentation up to date, and 47% flag documentation being out of sync as a key problem. These pipelines address both directly: they remove the manual step and they close the lag between shipping and documenting.

The Part That Breaks When You Skip It

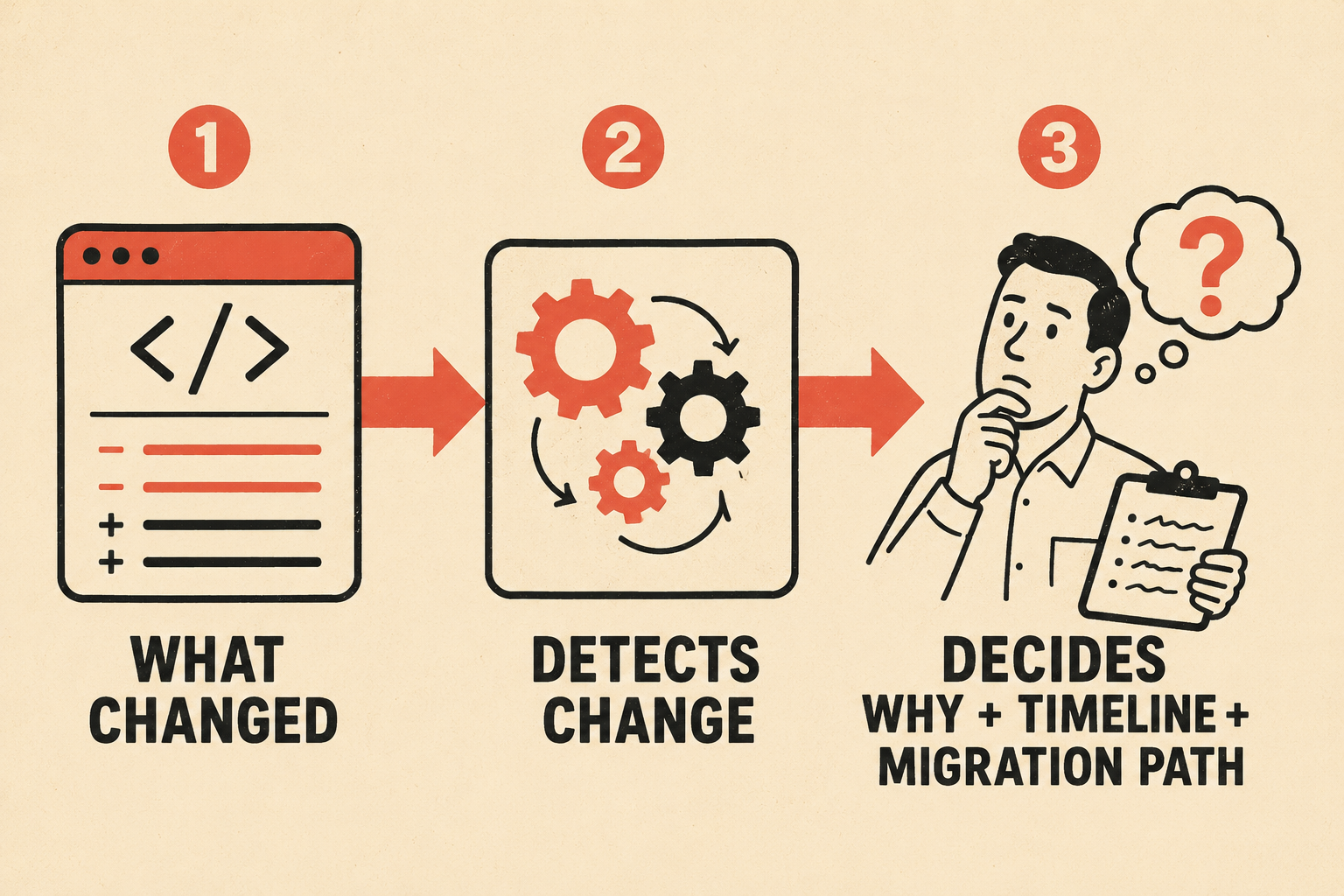

Breaking changes need a plan that commits alone won't give you.

When a parameter is removed or a response schema changes, the automated system can detect and flag it. What it can't do is decide how to communicate that change to consumers, set a deprecation timeline, or write the migration guide. Those decisions require a human.

The RFC 8594 Sunset HTTP header provides a standard mechanism for signaling that an endpoint will become unavailable at a specific date. Pairing the Sunset header with a Deprecation header gives consumers both the status and the timeline. Your automated documentation pipeline should parse these headers from the OpenAPI spec and surface them prominently in the generated docs — but someone has to set those headers in the first place, which means encoding the deprecation decision into the workflow before the automation can do anything with it.

Versioning follows the same logic. The freeCodeCamp tutorial on GitHub Actions and OpenAPI covers multi-version documentation — maintaining separate spec files for v1, v2, and v3, each with its own validation and deployment step. This is the right pattern. Old versions stay accessible, clearly marked, while the current version is the default. The pipeline handles the mechanics; the team decides when to cut a new version and when to archive an old one.

The APIDiff research from SANER 2018 showed that automated tools can reliably identify breaking and non-breaking changes between library versions hosted on git repositories. The detection is solid. The gap is always in the human layer: deciding what to do with the detected change, communicating it clearly, and giving consumers enough runway to adapt.

Anyway. The pipeline is not the hard part. The hard part is the input quality and the validation layer.

What This Actually Looks Like When It Works

The teams that get this right share a few characteristics. Their engineers write structured commit messages. Their PRs include descriptions that explain the why, not just the what. They have someone (a developer, a tech lead, a documentation owner) who reviews the auto-generated output before it publishes, adds the missing context, and catches the cases where the automation produced something technically accurate but practically useless.

The SmartBear data shows that 36% of teams already use an API definition like OpenAPI to automate documentation creation. That's the foundation. The commit-driven pipeline is the layer on top that keeps the spec in sync with what's actually shipping.

Speed and consistency come from the automation. Accuracy and judgment come from the person reviewing the output. Those two things aren't in tension; they're the point.

Doc Holiday generates API references, changelogs, and endpoint documentation directly from engineering workflows, then gives you the structure to review, validate, and manage that output without rebuilding a documentation team from scratch. If your commits are clean and you have someone who can own the validation step, the lag between shipping and documenting gets very short, very fast.